Description

Key Technical Specifications

- Product Model: RTX 4090

- Manufacturer: NVIDIA

- Architecture: Ada Lovelace

- GPU Memory: 24 GB GDDR6X, 384-bit bus, 1 TB/s bandwidth

- CUDA Cores: 16,384

- Boost Clock: Up to 2.52 GHz

- FP32 Performance: ~83 TFLOPS

- Tensor Core Performance (FP8): ~1,321 TOPS (with sparsity)

- Ray Tracing Performance: ~191 TFLOPS (RT Core throughput)

- Interface: PCIe Gen4 x16

- TDP: 450 W

- Power Connectors: 1× 12VHPWR (16-pin) or 3× 8-pin via adapter

- Display Outputs: 1× HDMI 2.1, 3× DisplayPort 1.4a
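
As a sanity check, two of the headline figures above can be roughly reproduced from the other listed specifications. The sketch below is a back-of-the-envelope calculation, not a vendor formula: it assumes each CUDA core retires one fused multiply-add (2 FLOPs) per clock, and it assumes a per-pin GDDR6X data rate of 21 Gbps, which is not stated in the list above.

```python
# Back-of-the-envelope check of two headline figures in the spec list.
CUDA_CORES = 16_384          # from the spec list
BOOST_CLOCK_GHZ = 2.52       # from the spec list
BUS_WIDTH_BITS = 384         # from the spec list
MEM_DATA_RATE_GBPS = 21.0    # assumption: per-pin GDDR6X data rate, not in the list


def peak_fp32_tflops(cores: int, clock_ghz: float) -> float:
    """Assumes one fused multiply-add (2 FLOPs) per core per clock."""
    return 2 * cores * clock_ghz / 1_000  # GFLOPS -> TFLOPS


def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Bits per second across the whole bus, converted to bytes per second."""
    return bus_width_bits * data_rate_gbps / 8


fp32 = peak_fp32_tflops(CUDA_CORES, BOOST_CLOCK_GHZ)
bandwidth = peak_bandwidth_gbs(BUS_WIDTH_BITS, MEM_DATA_RATE_GBPS)
print(f"Peak FP32: {fp32:.1f} TFLOPS")              # Peak FP32: 82.6 TFLOPS
print(f"Peak memory bandwidth: {bandwidth:.0f} GB/s")  # Peak memory bandwidth: 1008 GB/s
```

These are theoretical peaks; sustained throughput in real workloads is typically lower.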

Core Performance and Selection Advantages

The NVIDIA RTX 4090 delivers a generational leap in compute and graphics performance over its predecessor, the RTX 3090. Built on the Ada Lovelace architecture, it offers roughly 2× the FP32 throughput (~83 TFLOPS versus ~36 TFLOPS) and up to 4× the AI inference performance, the latter through 4th-gen Tensor Cores with FP8 support. This makes it well suited to projects that blend high-fidelity visualization with real-time data processing, such as digital twin implementations where live sensor data drives dynamic 3D models.

Key functional highlights include hardware-accelerated ray tracing for photorealistic rendering without CPU bottlenecks, and DLSS 3 with Frame Generation for smooth interactive simulation at 4K+ resolutions. For AI developers, the 24 GB of fast GDDR6X memory enables larger model prototyping on a single workstation, reducing dependency on cloud resources during early development phases.
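
To make the single-workstation prototyping claim concrete, the following minimal sketch estimates whether a model's weights fit in 24 GB. It is only a rough guide under stated assumptions: it counts weights alone, treats 1 GB as 10^9 bytes, and reserves a hypothetical 20% of memory (`overhead_fraction`) for activations and workspace. The parameter counts in the usage lines are illustrative, not tied to any specific model.

```python
GPU_MEMORY_GB = 24  # from the spec list

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weights_gb(num_params: float, dtype: str) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_gpu(num_params: float, dtype: str,
                overhead_fraction: float = 0.2) -> bool:
    """True if the weights fit after reserving a share of memory.

    overhead_fraction is a hypothetical reserve for activations, caches,
    and framework workspace; tune it for the actual workload.
    """
    budget_gb = GPU_MEMORY_GB * (1 - overhead_fraction)
    return weights_gb(num_params, dtype) <= budget_gb


for params, dtype in [(7e9, "fp16"), (13e9, "fp16"), (13e9, "int8")]:
    verdict = "fits" if fits_on_gpu(params, dtype) else "does not fit"
    print(f"{params / 1e9:.0f}B params @ {dtype}: "
          f"{weights_gb(params, dtype):.0f} GB, {verdict}")
```

Training needs considerably more memory than this estimate, since gradients and optimizer state are stored alongside the weights.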

From a total cost of ownership (TCO) perspective, the RTX 4090 can reduce project costs by consolidating roles: one high-end workstation can replace multiple mid-tier systems for tasks like CFD post-processing, neural network validation, or virtual reality walkthroughs. As a GeForce card it runs NVIDIA Studio drivers, which are tested against major creative applications for stability; RTX Enterprise drivers and formal ISV certification are reserved for NVIDIA's professional RTX cards. Additionally, integration with CUDA, cuDNN, and TensorRT accelerates time-to-deployment for custom AI pipelines.

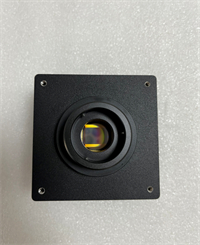

NVIDIA RTX 4090 HAND

System Integration and Expandability

The RTX 4090 integrates into professional workstation ecosystems through widely adopted software and hardware standards. It is fully supported in NVIDIA's unified development stack, including CUDA Toolkit, Nsight Systems, and Omniverse, and works with major engineering platforms such as ANSYS, Siemens NX, Dassault Systèmes SOLIDWORKS, and Autodesk Maya. As a GeForce card it typically runs NVIDIA Studio drivers; ISV-certified RTX Enterprise drivers target NVIDIA's professional RTX cards.

In terms of networking and interoperability, the GPU supports high-speed data ingestion via PCIe Gen4 and can feed results to higher-level systems through standard protocols. While it does not natively host OPC UA or MQTT, it can be embedded in edge servers running Linux or Windows IoT that expose inference results via these protocols to SCADA or MES layers. For cloud hybrid workflows, NVIDIA Base Command and NGC containers simplify model portability between local RTX 4090 workstations and data center GPUs (e.g., A100/H100).
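
The edge-server pattern described above can be sketched as follows: the host process serializes each inference result and hands it to whatever transport the site uses. This is a minimal illustration, not a reference integration. The topic name, device ID, payload fields, and the `publish` callable are all hypothetical; here a plain list stands in for a real MQTT or OPC UA client.

```python
import json
import time
from typing import Callable, Optional

# Any callable that accepts (topic, payload) can act as the transport.
Publisher = Callable[[str, bytes], None]


def build_payload(device_id: str, label: str, confidence: float,
                  timestamp: Optional[float] = None) -> bytes:
    """Serialize one inference result as UTF-8 JSON (hypothetical schema)."""
    message = {
        "device": device_id,
        "label": label,
        "confidence": round(confidence, 4),
        "ts": time.time() if timestamp is None else timestamp,
    }
    return json.dumps(message, sort_keys=True).encode("utf-8")


def publish_result(publish: Publisher, topic: str, payload: bytes) -> None:
    """Hand the payload to the transport supplied by the caller."""
    publish(topic, payload)


# Stand-in transport: collect messages in a list instead of sending them.
sent = []
publish_result(
    lambda topic, payload: sent.append((topic, payload)),
    "plant/line1/vision/result",  # hypothetical topic
    build_payload("rtx4090-edge-01", "defect", 0.9731, timestamp=1700000000.0),
)
print(sent[0][0], sent[0][1].decode("utf-8"))
```

In a real deployment the `publish` argument would likely be the publish method of an MQTT client library, with the broker address, QoS, and authentication configured on the host rather than on the GPU.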

Expandability is primarily vertical rather than horizontal: because of its 450 W TDP and physical size (typically triple-slot or larger), multi-GPU configurations are uncommon in standard workstations. It does scale effectively in distributed architectures, however; for example, multiple RTX 4090 workstations can serve as dedicated nodes for different simulation or training tasks. The card drives up to four displays simultaneously and supports resolutions up to 8K, enabling immersive multi-screen control rooms or design review setups. For industrial deployment, system integrators should ensure adequate chassis airflow, PSU capacity with headroom (≥1000 W recommended), and compatibility with 12VHPWR cabling standards to avoid power delivery issues.
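
One way to arrive at a PSU figure like the one recommended above is to sum the component power draws and apply a headroom factor. The sketch below is a simple sizing heuristic, not a vendor guideline: the CPU and platform wattages, the 25% headroom, and the 50 W rounding step are all hypothetical values chosen for illustration.

```python
import math

GPU_TDP_W = 450  # from the spec list


def recommended_psu_watts(cpu_w: float, other_w: float,
                          headroom: float = 1.25, step: int = 50) -> int:
    """Sum the component loads, add headroom, round up to a common PSU size.

    headroom and step are illustrative assumptions, not vendor figures.
    """
    load_w = GPU_TDP_W + cpu_w + other_w
    return math.ceil(load_w * headroom / step) * step


# Hypothetical workstation: 250 W CPU plus 100 W for drives, fans, and memory.
print(recommended_psu_watts(cpu_w=250, other_w=100))  # 1000
# Hypothetical lighter build: 125 W CPU plus 75 W for everything else.
print(recommended_psu_watts(cpu_w=125, other_w=75))   # 850
```

Transient power spikes can briefly exceed TDP, which is one reason to keep the headroom factor generous.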
